How to Automate Triggers from Snowflake to Slack

TL;DR

In this blog we cover how to automate alerts from Snowflake to Slack using three methods:

- Manual Script: Write and schedule a Python script to query Snowflake and send results to Slack.

- Snowflake Functions: Use Snowflake's email notification and Slack's email integration.

- Locale Platform: Use Locale's no-code platform for easy, maintenance-free automation, connecting Snowflake to Slack with customizable alerts.

Locale eliminates the need for manual script creation, complex scheduling, and potential points of failure associated with other methods.

Automating triggers to Slack can be a valuable way to keep your team informed of important events in your database. These triggers can help business simplify their process and make them more efficient. It allows you to use your data warehouse as a central system to power other functions/departments. There can be a variety of use cases that can be solved by automating Slack triggers from Snowflake such as:

- Real-time alerts for critical database events such as sudden spikes in traffic or potential security breaches

- Automated inventory level report to biz ops teams

- Potential SLA/TAT breaches

...and many more. However, Setting up email notifications from Snowflake can be a daunting task. In this detailed guide, we’ll walk you through three straightforward methods to set up Slack alerts from Snowflake.

- Method #1: Manually through Custom Script

- Method #2: Using Internal Snowflake functions

- Method #3: Automate triggers through Locale

Method #1: Manually through Custom Script

You can create a custom Python script that uses the Snowflake connector library in Python to run a query, store the results, and send it on Slack using SMTP using a service like say AWS SES. Here is a step-by-step process to do it:

1. Install the snowflake connector

2. Run run_snowflake_query function

This function uses the snowflake connector available in Python to run a query in our snowflake warehouse and return the query results.

3. Run send_message_to_slack function

We get the results from the run_snowflake_query function and use them to trigger slack messages using the send_message_to_slack function.

Here channel will be either your channel name like “#test-messages” or a member ID for a user.

4. Schedule the script with Cron

Once you have the script ready with the above two functions, let’s add a cron to run this script on a given cron schedule

Open the cron table with crontab -e to add a new cron job. This will open the cron table in an editor. Add a new line to schedule your Python script. For example, to run it every 5 minutes, add:

Save and exit the editor to activate the new cron job. By following these steps, your Python script will now run every 5 minutes, just like a cron job.

Limitations:

- Security: Storing tokens and using them directly through a script can be harmful.

- Maintenance & Manual Effort: If there are changes in Snowflake credentials or Slack API tokens, you will need to update the script manually. It lacks a mechanism for dynamic configuration changes

- Cron Job Complexity: Scheduling the script with a cron job introduces complexity in terms of managing the schedule, especially if you need to make changes to the frequency or timing of the script execution.

- Single Point of Failure: If the server where this script is running encounters issues, it can result in message delivery failures.

Method #2: Using Internal Snowflake functions

In this method, we would use Snowflake’s Sending Email Notification functionality which can allow you to create a stored procedure to send an email to a fixed email address. Then use Slack’s Send Emails to Slack functionality to send Slack messages from the email received.

1. Generating email for Slack channel

You can send a message to a Slack channel or user using an email by using the following feature and following the steps mentioned here → Send Emails to Slack

Once you have generated the email from above, you can go ahead with the further steps.

2. Writing the Query

Once you have the above set, you need to write a transaction that runs the query, stores it in a snowflake scripting variable, and uses the stored procedure to send the results to the email, here referred to as ‘user@test.com',.

3. Automating the SQL query

Create a Stored Procedure

Firstly, encapsulate your SQL logic into a stored procedure. This is necessary because Snowflake Tasks can only invoke stored procedures. The stored procedure provides the logic that the task will execute.

Define the Task

Once the stored procedure is in place, create a Snowflake Task that calls it. Here, you specify the virtual warehouse to run the task and how often it should run, using a cron-like schedule format.

Activate the Task

Tasks are not active by default. After defining the task, you need to activate it to start the scheduled runs.

Limitations

- Limited Message Customization: Using Snowflake's Email Notification functionality to send Slack messages indirectly through email may limit your ability to customize the Slack message. Slack offers rich formatting options, attachments, and other features that may not be easily achievable through email.

- Complexity: This method involves multiple steps and configurations, including creating a stored procedure, defining a task, and activating it. This complexity can make it challenging to set up and maintain, especially for developers who are not familiar with Snowflake.

- Dependency on Snowflake: You are tightly coupling your Slack messaging system with Snowflake. If you decide to migrate away from Snowflake or use a different data warehouse, you would need to rewrite your messaging system.

- Scheduling Constraints: The scheduling of tasks is limited to Snowflake's task scheduler, which may not be as flexible or feature-rich as dedicated job scheduling systems.

Method #3: Automate triggers through Locale

Locale, no-code platform handles the heavy lifting, providing a maintenance-free, reliable, and user-friendly experience. Automating Slack triggers through locale is an easy deal, With just a few clicks you can automate getting alerts from Snowflake to Slack. Let’s see how you can automate through Locale.

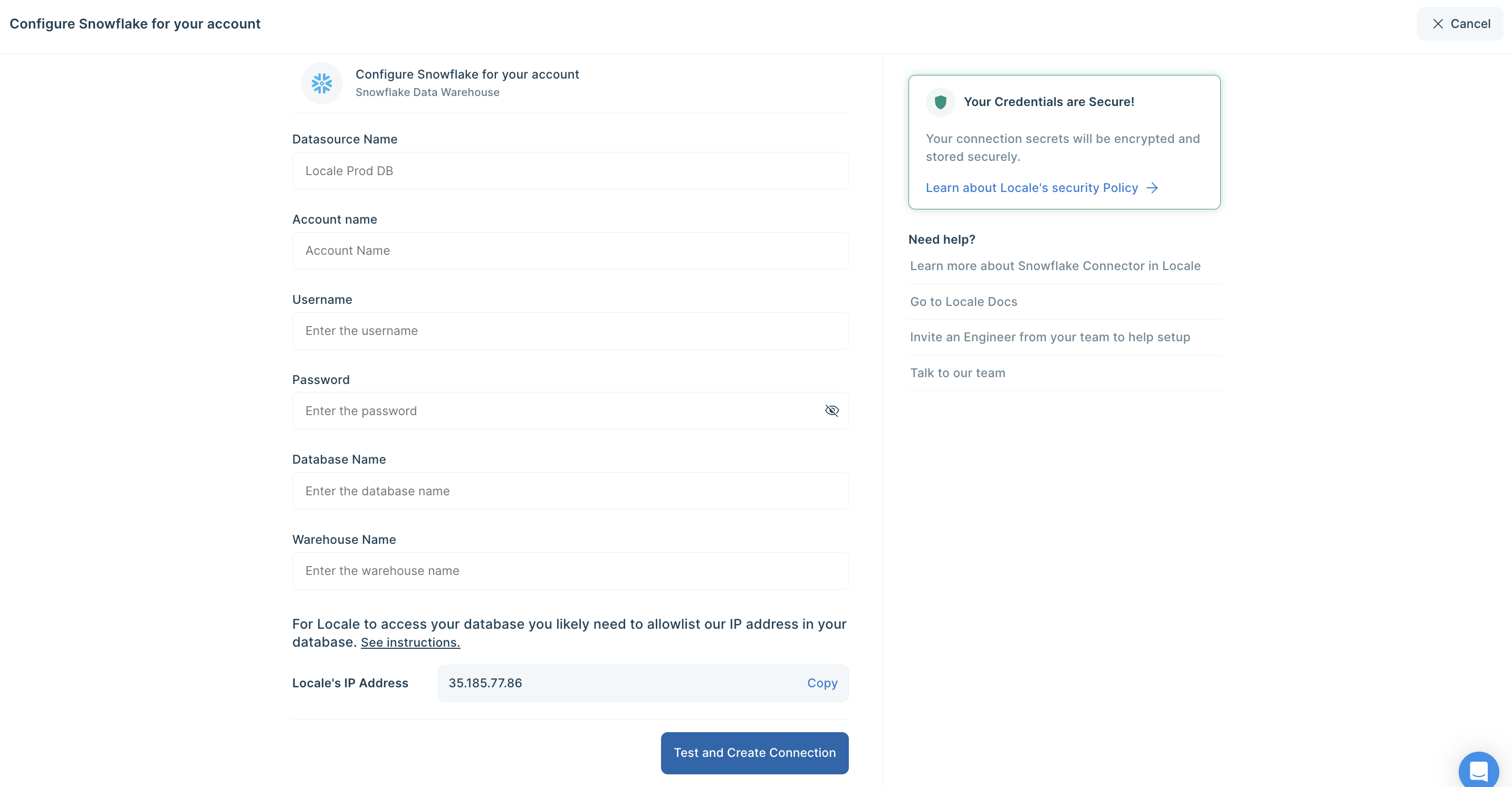

Step 1: Log in to Locale, Go to Org Setting, and Connect your Snowflake data warehouse from the data source.

Step 2: Now go to Alerts → Create New Alerts. Choose your database → Click on Setup data to be monitored.

Step 3: Write your own SQL Query and click Run to verify the data. Once you are satisfied, click Done.In the above example, if you want to get notified when sales drop below a certain threshold, you can use the below SQL script:

Step 4: Now Setup your schedule based on your requirement, this would automatically run the query based on the frequency you set. Click on Run the query at frequency and choose your schedule

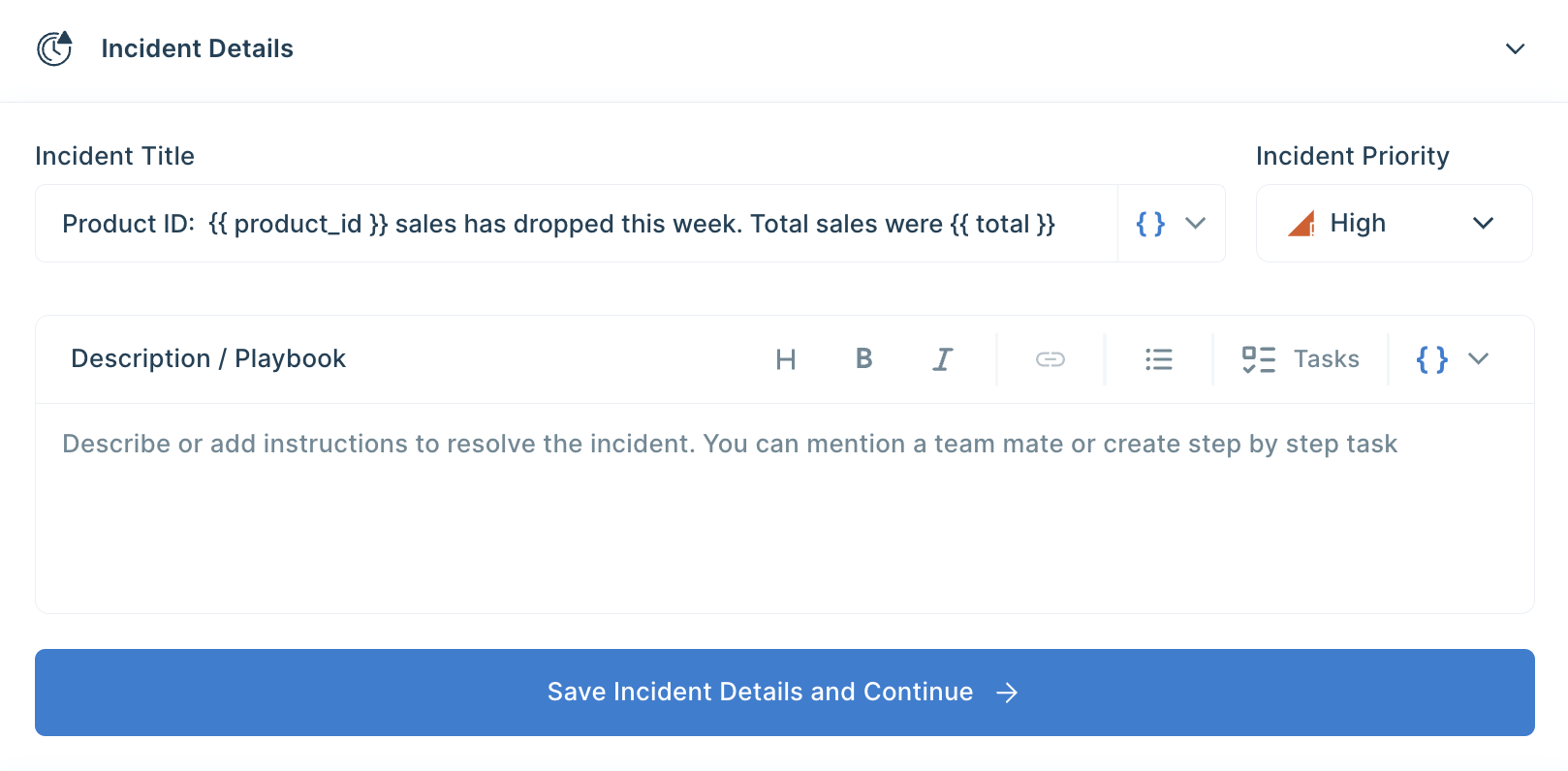

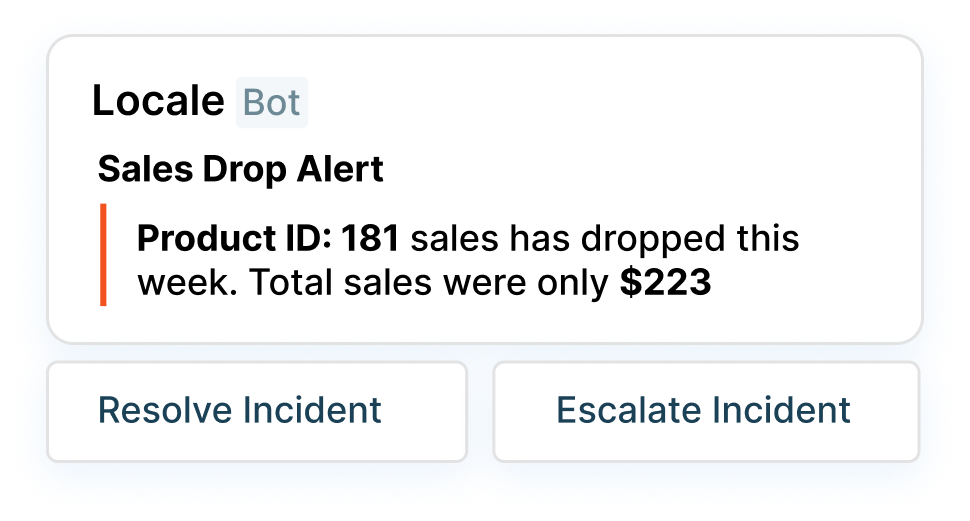

Step 5: With Locale, You can also customize the Slack message to get a better idea. To configure the Slack message, Click on Configure Incidents and enter your message in the Incident Title.

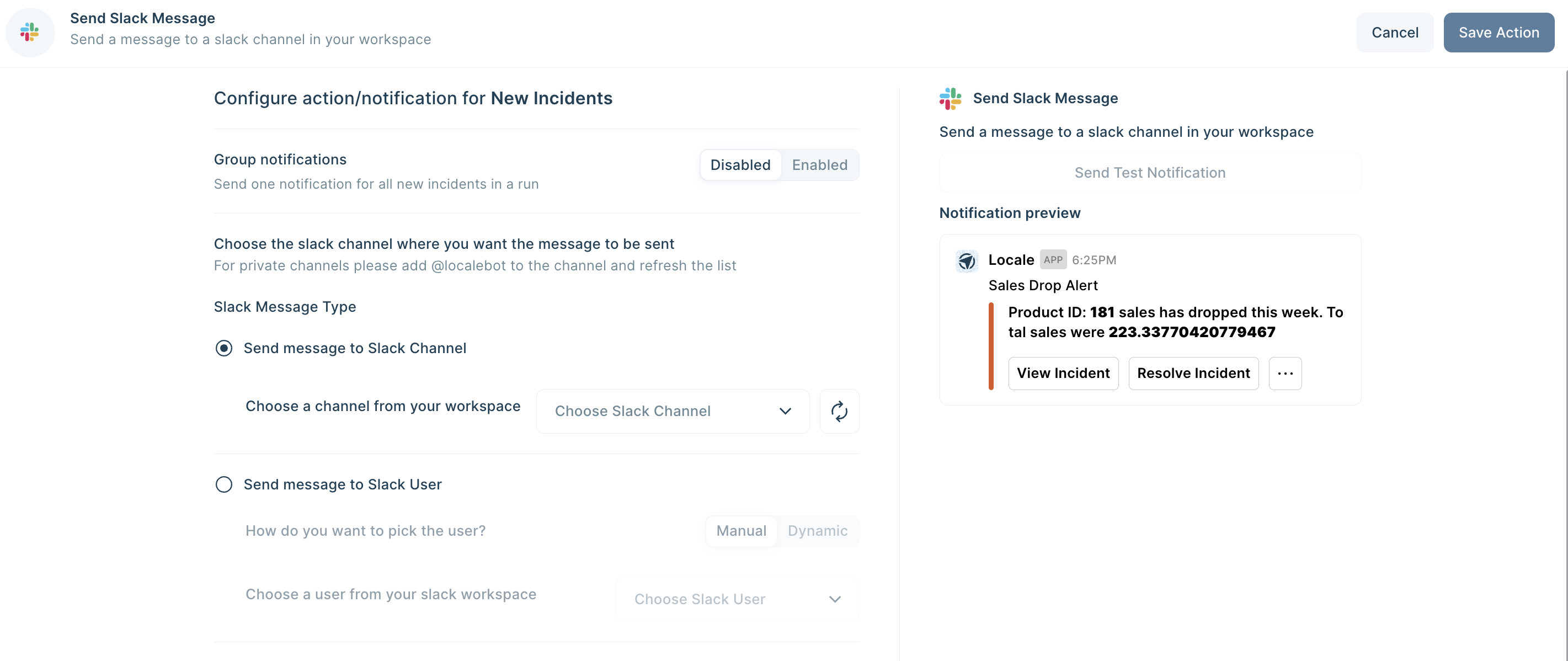

Step 6: Final Step, Set up whom or on which channel the Slack alert should be sent. Go to the Setup Notification section and click on Add Notification then select Send Slack Message. Select whether you want the Slack message to be sent to a channel or a user. You can also preview and send test notifications.

Save the alert. It will automatically run itself on the pre-set schedule and trigger a Slack notification.

Conclusion

We looked at three methods to automate triggers from Snowflake to Slack, each comes with its own set of challenges and considerations. The manual approach using custom scripts involves potential security risks, maintenance complexities, and the burden of managing cron jobs. Using internal Snowflake functions introduces limitations in message customization, complex configurations, and dependencies on Snowflake's ecosystem.

On the other hand, Locale eliminates the need for manual script creation, complex scheduling, and potential points of failure associated with other methods. The ease of connecting Snowflake to Slack, setting up alerts, and customizing messages to provides a significant advantage over traditional approaches. Moreover, Locale's automated scheduling, robust notification setup, and ability to handle dynamic configurations contribute to a more streamlined and efficient workflow. The platform's independence from server-based cron jobs reduces the risk of failures and ensures reliable message delivery. Additionally, Locale's flexibility allows users to tailor Slack messages precisely to their needs, enhancing the overall alerting experience.

Excited to get your hands dirty? Secure early access, talk to us by clicking here.