How to Automate Sending Webhooks from SQL Query Triggers

TL;DR

This guide presents two methods for automating webhooks from SQL query triggers:

- Asynchronous Webhook Calls with Python:

- Create a PostgreSQL trigger function to send notifications through a channel whenever a new row is inserted.

- Use a Python service with the psycopg2 library to listen for these notifications and send webhooks asynchronously using the aiohttp library.

- Automate Sending Webhook without code using Locale:

- Connect your database to Locale.ai.

- Set up frequency to automatically run SQL queries.

- Configure webhook triggers.

Locale.ai offers a streamlined approach to sending webhooks from SQL triggers, reducing setup complexity and maintenance efforts compared to manual methods.

Real-time notifications and updates are crucial. One common scenario is to automate triggering webhooks based on changes in a database, which could be essential for numerous applications like instant alerts, data synchronization, and workflow automation.

SQL query triggers provide a way to initiate actions when specific changes occur in a database. However, sending webhooks from SQL triggers can sometimes turn into a complex task, especially when manual configurations come into play. In this detailed guide, we’ll walk you through easy methods to Send Webhooks from SQL Triggers.

- Method #1: Asynchronous Webhook Calls with Python

- Method #2: Trigger Webhooks through Locale

Method #1: Asynchronous Webhook Calls with Python

1. Creating the Trigger in PostgreSQL:

First, we'll need to create a trigger function that gets executed whenever a specified event occurs (e.g., a new row is inserted).

Next, we create a trigger that fires the above function whenever a new row is inserted into a specified table.

2. Python Service to Listen for Triggers:

In Python, we can use a library like psycopg2 to listen to the PostgreSQL notifications.

In this method, a PostgreSQL trigger function (notify_webhook) sends a notification through a PostgreSQL channel (webhook_channel) whenever a new row is inserted into your_table. Then a Python service is set up to listen for these notifications using the psycopg2 library. Upon receiving a notification, it extracts the new row's ID from the notification payload and sends a webhook notification using the aiohttp library in an asynchronous manner to a specified webhook URL. Databases can handle huge workloads, so consuming notifications asynchronously will help us concurrently make multiple webhook calls and improve performance.

Method #2: Automate Sending Webhook without code

Locale is a no-code platform which significantly simplifies the process of sending webhooks from SQL triggers. With just a few clicks, you can automate the process of sending preconfigured or custom webhooks without the need for additional scripts or external monitoring tools.

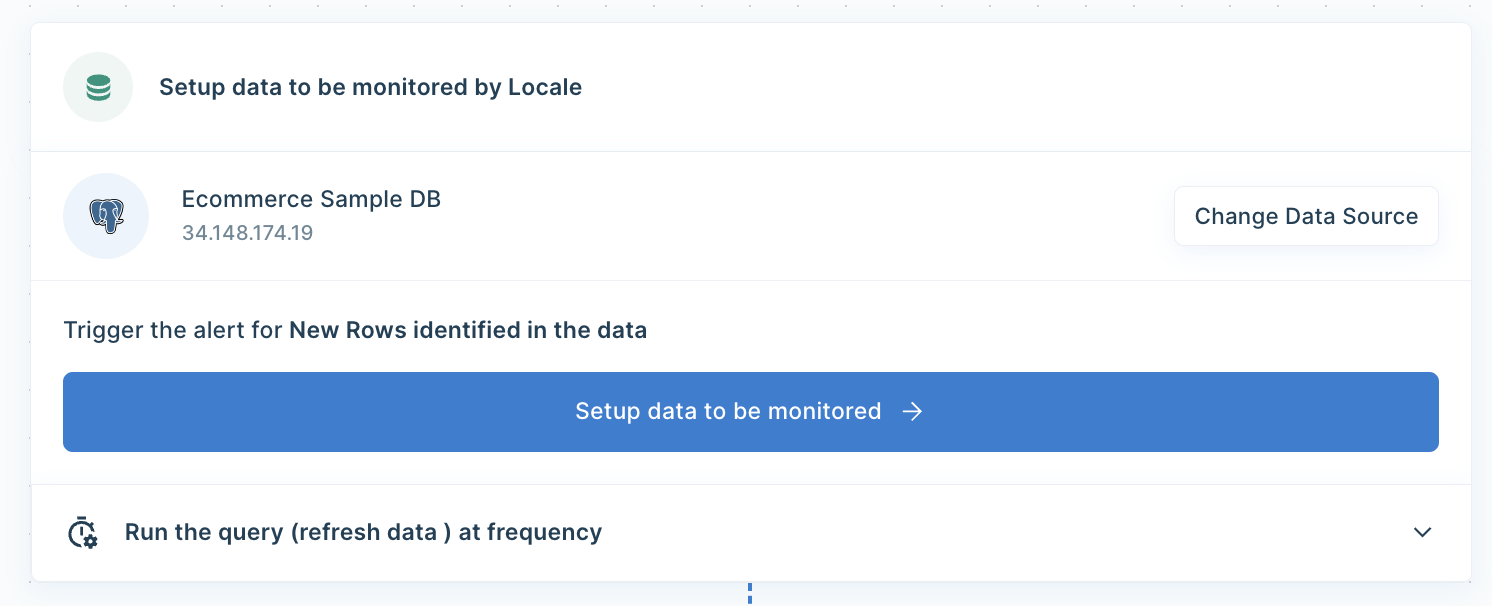

Login to Locale, Go to Org Setting and Connect your database from the data source. Now go to Alerts → Create New Alerts. Choose your database → Click on Setup data to be monitored.

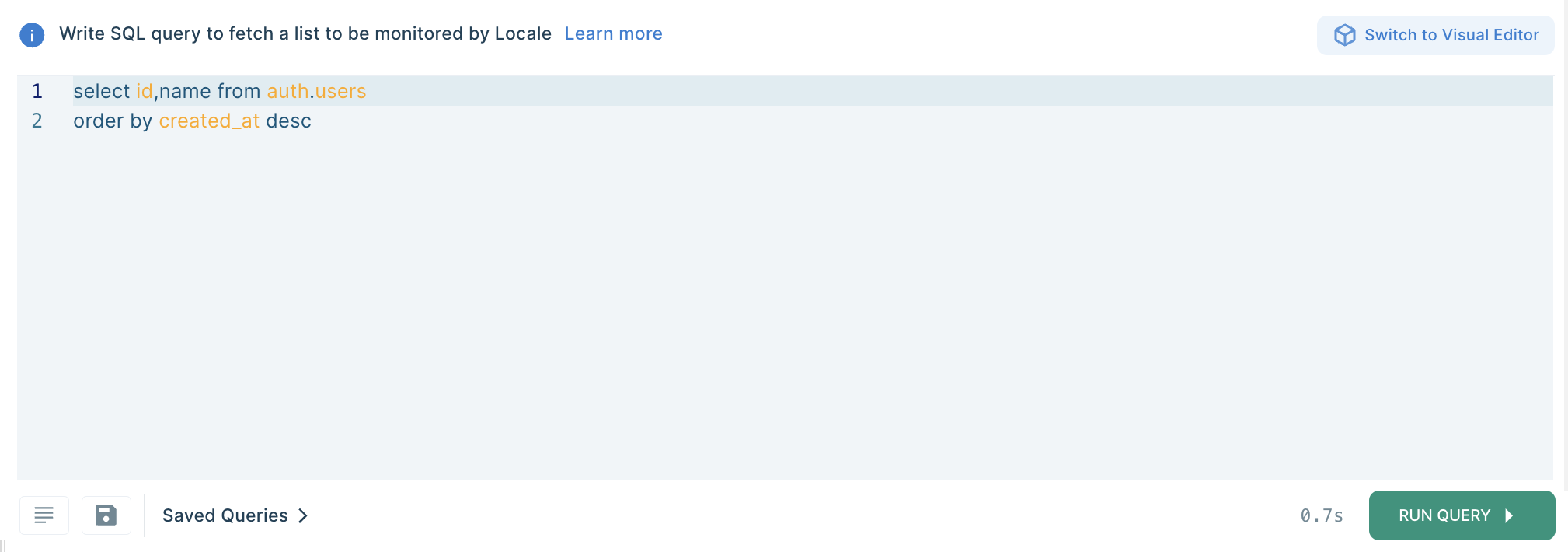

Write SQL Query to set the alert condition that will trigger webhook when the query results match:

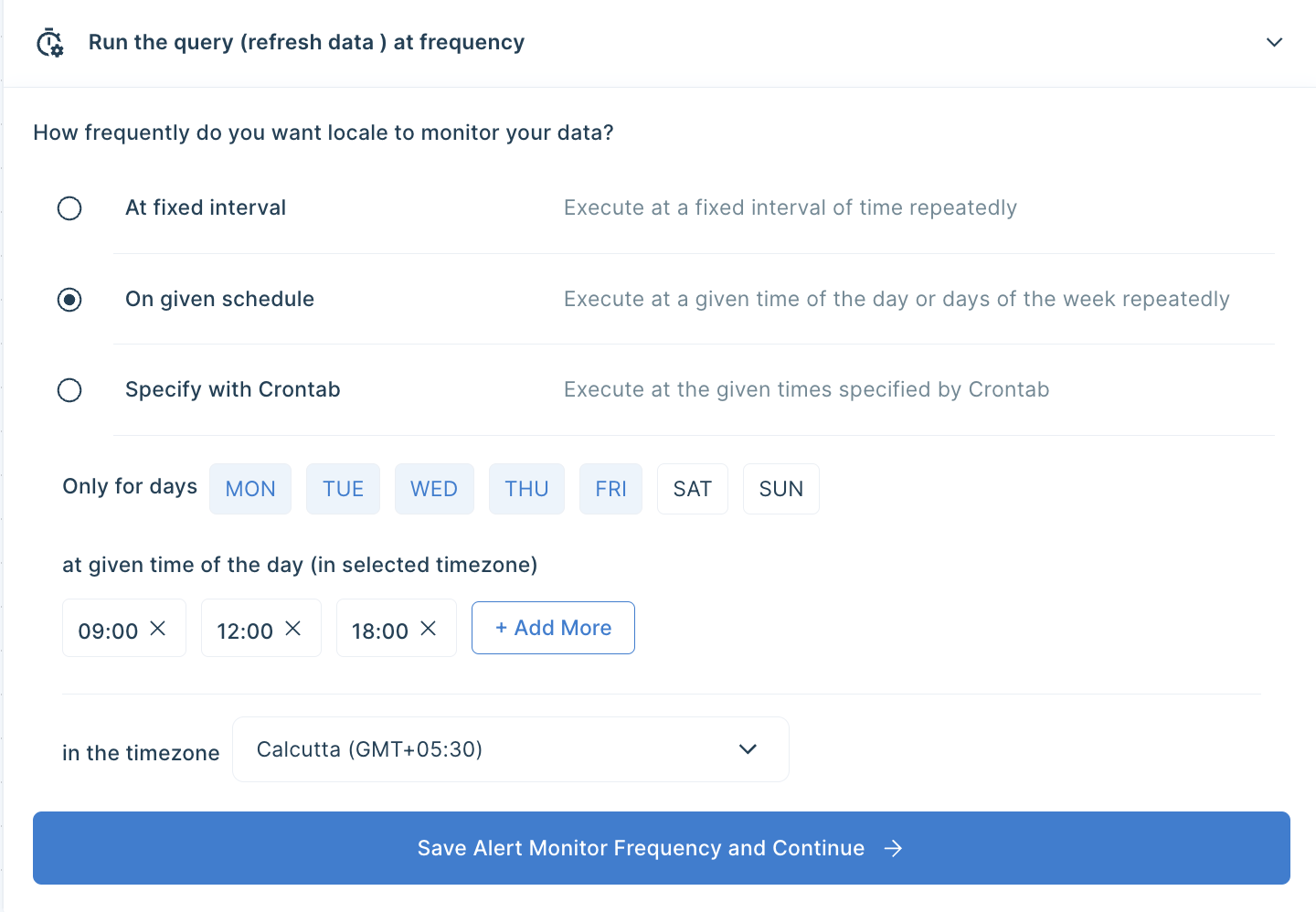

Now Setup Alert Frequency based on your requirement, this would automatically run the query based on the frequency you set.

Finally, you can configure webhook trigger. Go to the Setup Notification section and click on Add Notification then select Webhook. Fill in your API point. Here you can either select Default or can select Custom to customize the result as per your requirements.

Save the alert, it will automatically run itself on the pre-set schedule and trigger a webhook.

Conclusion

Now, you have two methods to send webhooks from SQL triggers. The first, the manual method, requires a good understanding of SQL, PostgreSQL triggers, and asynchronous programming in Python. It also necessitates the management of a Python service that listens for database notifications and sends webhooks accordingly. It also requires significant setup and ongoing maintenance which can be prone to errors, and troubleshooting issues would be time-consuming.

Sending webhooks from SQL query triggers doesn’t have to be an intricate puzzle. Locale simplifies the complex task allowing you to do this with minimal setup so that you can focus on other important tasks. It provide you with the comprehensive option to choose a schedule based on your requirement to run the query. Moreover, if you want to expand sending reports/data to other destinations like Gmail, Whatsapp, MS Teams etc, you get to do that with 100+ integration options.

Want to try it out yourself right now? Reserve your spot by talking to us.