Automate Sending Triggers and Reports to Email from BigQuery

.png)

TL;DR

This blog details two methods for automating the sending of triggers and reports via email from BigQuery:

Method #1: Manually Using Custom Python Script:

- Configure SMTP server settings in Python.

- Custom Python script to execute SQL query & send results via email.

- Schedule the script to run using cron jobs or Task Scheduler.

Method #2: Using Locale for Email Alerts:

- Connect your BigQuery database to Locale.

- Create and run SQL queries to monitor data.

- Set up schedules which will send email.

Method #1 involves manual scripting and potential complexities, Method #2 offers a simpler, no-code solution through Locale.ai allowing for easy automation of sending emails from BigQuery without any setup or maintenance.

Operations teams closely and frequently monitor essential metrics like daily sales performance, inventory levels, and issue tracking. The manual tracking of these metrics is not only time-consuming but also prone to errors. To proactively address potential issues, teams can employ automated alerts that notify the appropriate individuals. These alerts not only ensure timely awareness but also provide vital information for swift issue resolution. These alert triggers are adaptable and can be configured on any database or data warehouse.

In this comprehensive guide for BigQuery users, we will demonstrate the process of automating triggers to send Emails from BigQuery.

- Method #1: Manually Using Custom Python Script

- Method #2: Using Locale for Email Alerts

Method #1: Manually Using Custom Python Script

Let's explore how this can be achieved by fetching sales data from Google BigQuery and sending emails using Python.

Authenticate BigQuery

First, authenticate your access to BigQuery:

1. Create a service account in the Google Cloud Platform (GCP).

2. Download the JSON key file for this account.

3. Set an environment variable for your key file:

Setting Up Email Configuration

Configure your SMTP server in Python for sending emails. This involves setting up SMTP host, port, and credentials.

Python Script for Query Execution and Email Dispatch

This script connects to BigQuery, executes a SQL query, and sends an email with the results:

Replace the placeholders with your SMTP details, email addresses, and BigQuery query. This script will send an email with the query results.

Schedule the Script in Crontab:

- Open a terminal or command line interface. Enter crontab -e to edit the crontab file. This opens the crontab file in a text editor.

- In the crontab file, add a line that specifies when and how often you want the script to run. The format is:Here, each asterisk represents a time unit: minute, hour, day of the month, month, and day of the week, respectively. For example, to run the script every day at 9 AM, you would use:

- Ensure /path/to/python is the full path to the Python interpreter and /path/to/send_sql_results.py is the full path to your Python script.

- Run crontab -l to list all cron jobs and verify that your new job is correctly listed.

Note: The cron utility is typically available on Linux and Unix-like systems. If you are using a Windows server, use the Task Scheduler instead. Also, remember that the environment in which cron jobs run usually differs from your regular user environment, so ensure that all necessary environment variables and paths are correctly set in the script or within the crontab.

Limitations and Considerations

While this method is effective, it comes with some limitations:

- Maintenance: The script needs regular updates and checks.

- Error Handling: More sophisticated error handling may be required for production use.

- Server Limitations: Depending on your SMTP server, you might face limitations on the number of emails sent per day or other restrictions.

Method #2: Using Locale for Email Alerts

Locale, no-code platfrom handles the heavy lifting, providing a maintenance-free, reliable, and user-friendly experience. With just a few clicks, you can automate alert triggers to email.

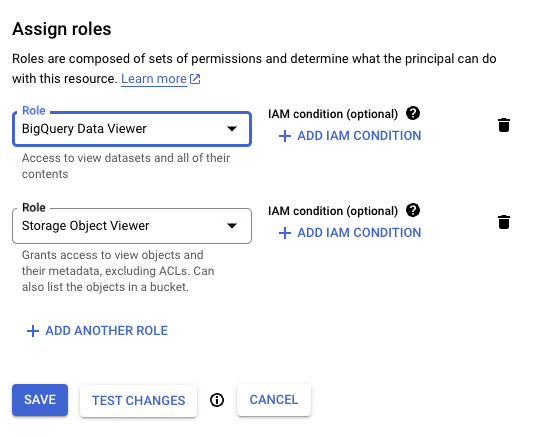

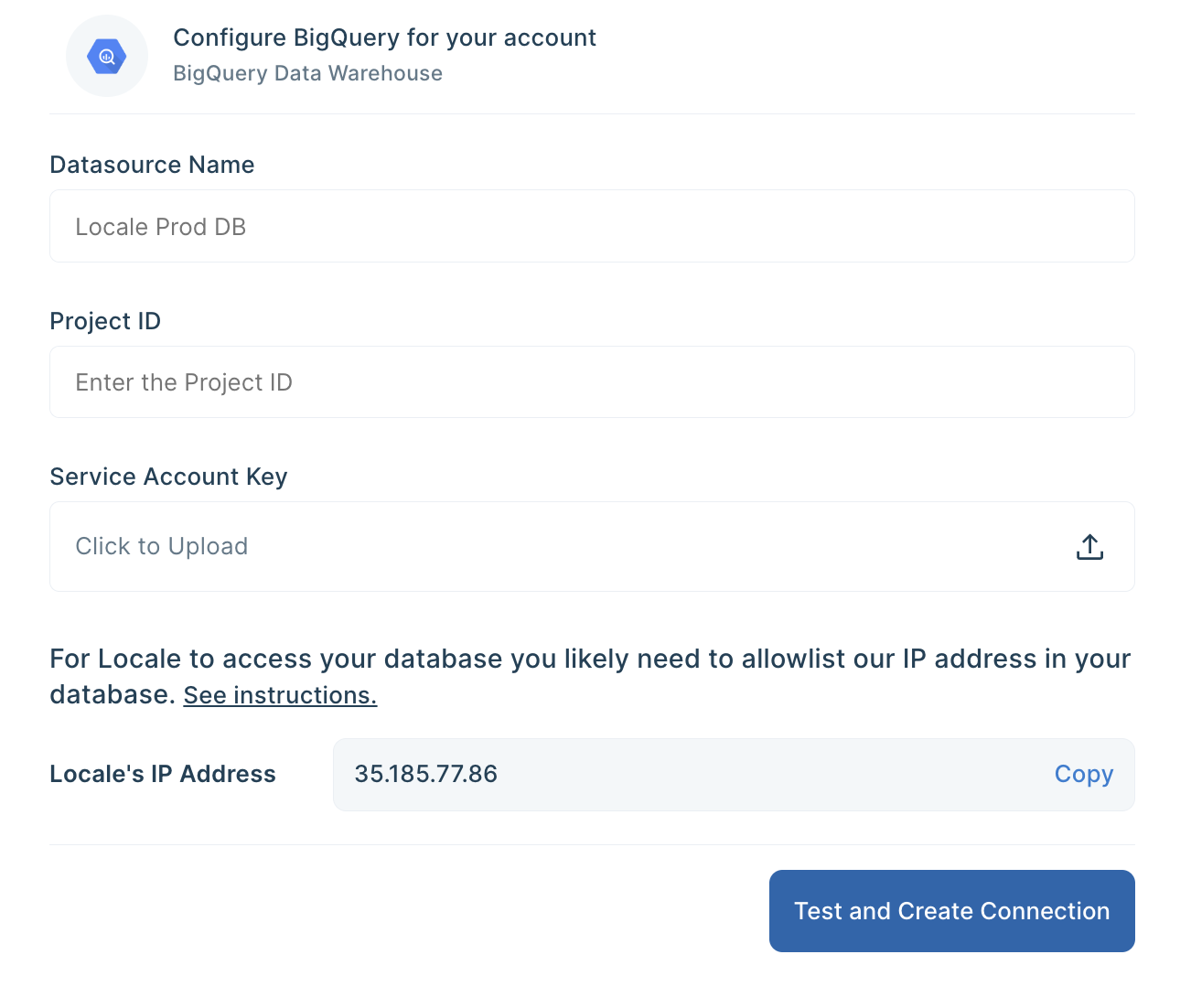

Step 1: Login to Locale, Go to Org Setting and Connect your BigQuery database from the data source.

Step 2: Now go to Alerts → Create New Alerts. Choose your database → Click on Setup data to be monitored.

Step 3: Write your own SQL Query and click Run to verify the data. Once you are satisfied, click Done.

Step 4: Now Setup your schedule based on your requirement, this would automatically run the query based on the frequency you set. Click on Run the query at frequency and choose your schedule

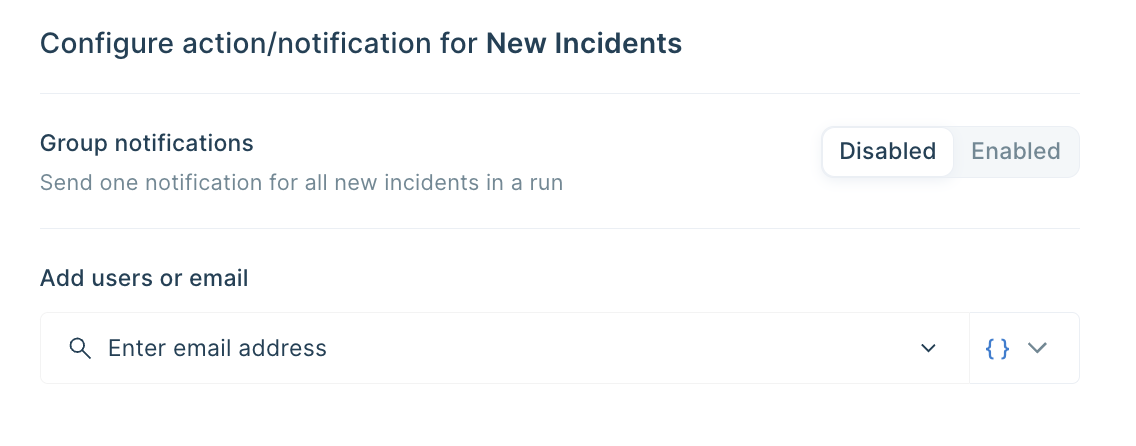

Step 6: Final Step, you can set up whom the email should be sent. You can click on Add Notification/action from the Setup Notification/Action section. Now click on Email Subscribers and add all the email addresses to whom you want to send emails.

You can also dynamically send emails based on SQL Query result by selecting from { } icon

Save the alert. It will automatically run itself on the pre-set schedule and trigger a Slack notification.

Conclusion

Automating emails helps your team to be notified on their preferred channel so that they can quickly respond to changes or issues. In this detailed guide, we looked at two methods that can help you automate emails from the BigQuery database. Although Method #1, through Python Script, is a viable option, it can be complex and error-prone to implement, especially if you need to handle complex notification scenarios.

Locale’s no-code platform makes it easy to automate email alerts from your BigQuery database with minimal one-time setup. Locale helps you to:

- Easy automation of triggers to Email

- Choose a schedule for sending notifications based on your requirements.

- Dynamically choose people to send emails based on the query result allowing alerts only to the person it needs.

- Group the notifications ensuring less noise to the users.

- Set up and provide a clear playbook for users to follow in case of any issue so that it can be resolved efficiently.

- Set up an escalation rule to ensure issues are being resolved.

- Moreover, if you want to expand sending reports/data to other destinations like Slack, WhatsApp, MS Teams, etc, you get to do that with 100+ integration options.

If you are looking for a simple and reliable way to automate triggers to email from your BigQuery Database, get early access to Locale, book a call with us.